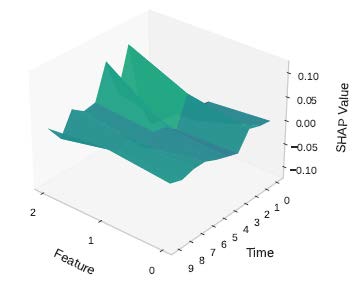

Explainability is critical for the adoption of machine learning models in industrial systems, particularly for failure detection and prediction. This thesis explores the application of SHapley Additive exPlanations (SHAP) to time-series data containing failure events, focusing on attributing model decisions to both relevant features and critical time steps to improve transparency and trust.
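To illustrate what attributing a decision to both features and time steps can look like, here is a minimal sketch. It is not the method prescribed for the thesis. It assumes a toy linear failure score and an all-zero baseline window, and it computes exact Shapley values by brute force, which is only feasible for very small windows. SHAP-style tools approximate these values for realistic models. The names `exact_shapley`, `toy_failure_score`, `weights`, and the example window are hypothetical.

```python
from itertools import combinations
from math import factorial

import numpy as np


def exact_shapley(model, x, baseline):
    """Exact Shapley values for one window x of shape (T, F).

    Every (time step, feature) cell is treated as a player. Cells that
    are absent from a coalition are replaced by the baseline value.
    """
    flat_x, flat_b = x.ravel(), baseline.ravel()
    n = flat_x.size
    phi = np.zeros(n)

    def value(coalition):
        # Start from the baseline and switch on the cells in the coalition.
        z = flat_b.copy()
        idx = np.array(coalition, dtype=int)
        z[idx] = flat_x[idx]
        return model(z.reshape(x.shape))

    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                phi[i] += weight * (value(subset + (i,)) - value(subset))
    return phi.reshape(x.shape)


# Hypothetical toy model: a linear failure score that weights the most
# recent time step (last row) most heavily.
weights = np.array([[0.1, 0.0],
                    [0.2, 0.1],
                    [0.9, 0.4]])


def toy_failure_score(window):
    return float((weights * window).sum())


# One window with T = 3 time steps and F = 2 features (made-up values).
x = np.array([[1.0, 0.5],
              [1.2, 0.4],
              [3.0, 2.0]])
baseline = np.zeros_like(x)

phi = exact_shapley(toy_failure_score, x, baseline)
per_time_step = phi.sum(axis=1)  # attribution aggregated over features
per_feature = phi.sum(axis=0)    # attribution aggregated over time steps
```

Summing the attribution matrix along one axis gives a per-time-step or per-feature view of the same explanation, and the individual attributions add up to the difference between the score of the window and the score of the baseline.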

Requirements

- Basic knowledge of Ubuntu / ROS2

- Python / C++

- Git

More details are available in the following file:

- SHAP4time (577 KB)

Contact person:

M.Sc. Yongxu Ren, yongxu.ren@fau.de